Facebook is building a visual cortex to better understand content and people

Manohar Paluri, Manager of Computer Vision Group at Facebook ©Robert Wright/LDV Vision Summit

Manohar Paluri is the Manager of the Computer Vision Group at Facebook. At our LDV Vision Summit 2017, he spoke about how the Applied Machine Learning organization at Facebook is working to understand the billions of pieces of media content uploaded to Facebook every day in order to improve people’s experiences on the platform and connect them to the right content.

Good morning everyone. Hope the coffee's kicking in. I'm gonna talk about a specific effort, an umbrella of efforts, that we are calling ‘building Facebook's visual cortex.’

If you think about how Facebook started, it was people coming together, connecting with friends, with people around you. And slowly, through these connections, using the Facebook platform to talk about things that they cared about and to upload their moments, starting with photos. Some may not be the right thing for the platform. Some are obviously moments that you care about. And slowly moving towards video.

This is how Facebook has evolved. The goal for applied machine learning, the group that I'm in, is to take the social graph and make it semantic. What do I mean by that? If you think about all the nodes there, the hard nodes are basically what people actually interact with, upload, and so on. But those soft nodes, the dotted lines, are what the algorithms create. This is our understanding of the people and the content that is on the platform.

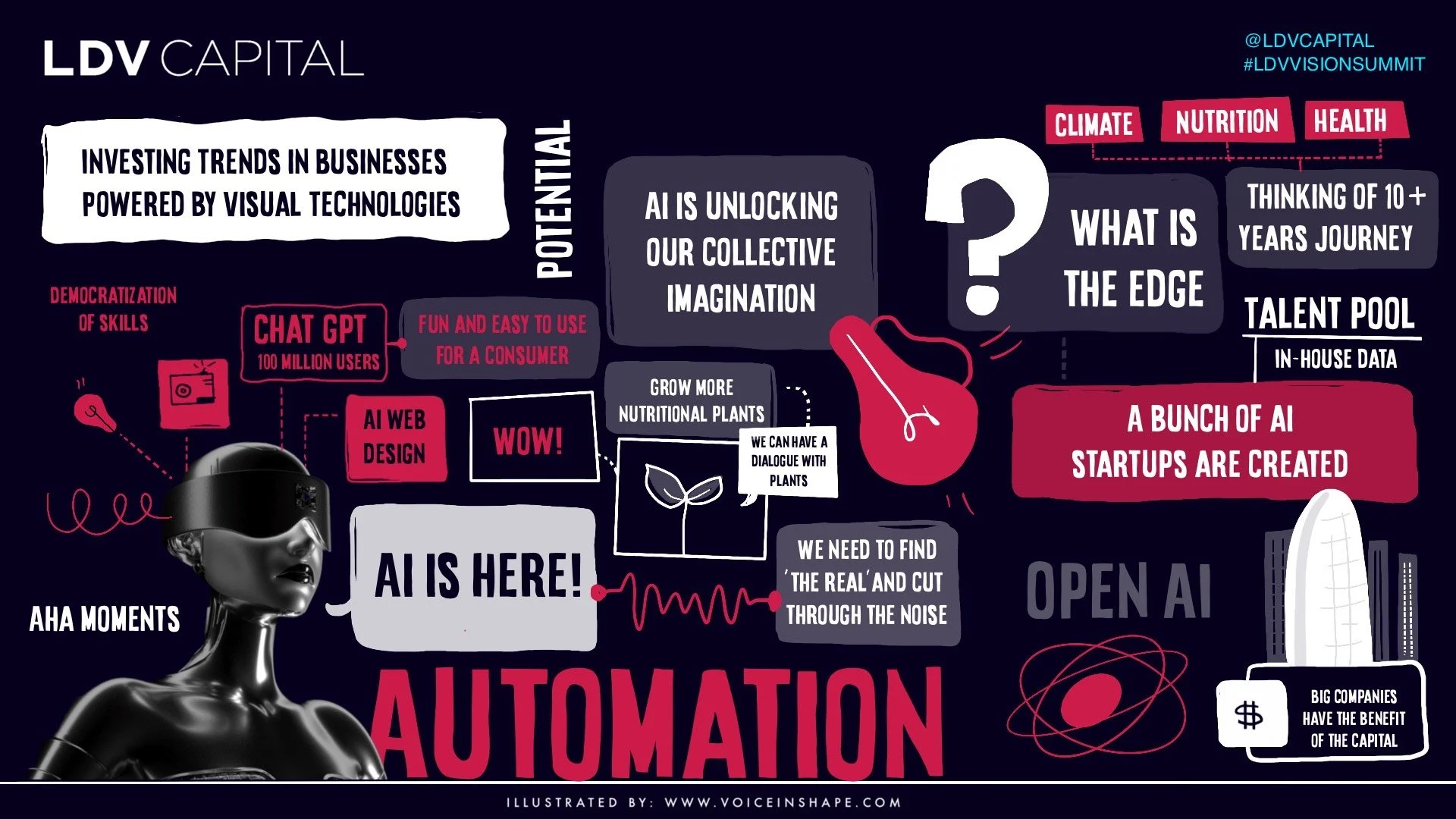

This is useful, and thinking about it this way is scalable, because whatever product, end technology, or experience you're building now has access to not only the social graph but also the semantic information. So you can use it in various ways. This is something that has actually revolutionized the use of computer vision specifically.

Now, if you take a step forward, most likely the last thing that you liked on Facebook is either a photo or a video. When I started in 2012 we were doing a lot of face recognition, but we've started moving beyond that. Lumos is the platform that was kind of born out of my internship. So I'm super excited, because Lumos today processes billions of images and videos. It has roughly 300-odd visual models that are being built by computer vision experts and general engineers. They don't necessarily need to have machine learning and computer vision expertise. It uses millions of examples. Even though we are making significant progress on semi-supervised and unsupervised learning, the best models today are still fully supervised models.

Now, going through a very quick class, the state-of-the-art models today are deep residual networks. Typically what you do is you have a task. You take this state-of-the-art deep network and train it for that task. It takes a few weeks, but if you have distributed training then you can bring it down to hours. If you have a new task, the obvious baseline is to take a new deep net and train it for the new task.

But think about Facebook, and think about billions of images and hundreds of models. You can't simply multiply the two; it's not feasible. So what do you do? The nice thing about deep networks is their hierarchical representations. In a hierarchical representation, the lower layers are generalized representations, and the top layers are specific to the task. Now, if you are at Facebook and you have a task, you should be able to leverage the compute already spent on billions of these images again and again for your task.

So with Lumos, what people can do is actually plug in at whichever layers suit them. And they make the trade-off between compute and accuracy. This is crucial to scale to all the efforts that we are doing for billions of images. Now, as the computer vision group, we might not understand the implications of a loss of accuracy, or of making something faster at the cost of accuracy. But the group that is building these models knows this very well. So with Lumos they are able to do this in a much simpler manner and in a scalable way.
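The idea of plugging task heads into different layers of one shared network can be sketched roughly as follows. This is a toy illustration, not Lumos itself: `SharedTrunk`, `LinearHead`, `make_layer`, and `classify` are hypothetical names, and the "layers" are simple scale-and-ReLU functions standing in for real network blocks. The sketch assumes that a head tapping an earlier layer lets the trunk stop sooner, which is one way the compute versus accuracy trade-off could show up.

```python
import math
from typing import Callable, Dict, List

Vector = List[float]
Layer = Callable[[Vector], Vector]


def make_layer(scale: float) -> Layer:
    """Toy stand-in for a network block: scale every value, then apply ReLU."""
    return lambda x: [max(0.0, scale * v) for v in x]


class SharedTrunk:
    """Hypothetical shared network whose activations many task heads reuse."""

    def __init__(self, layers: List[Layer]) -> None:
        self.layers = layers
        self.layers_run = 0  # counts layer evaluations, a rough proxy for compute

    def forward(self, image: Vector, depth: int) -> List[Vector]:
        """Run only the first `depth` layers and return each layer's output."""
        activations: List[Vector] = []
        x = image
        for layer in self.layers[:depth]:
            x = layer(x)
            self.layers_run += 1
            activations.append(x)
        return activations


class LinearHead:
    """Small task-specific classifier that reads features from one trunk layer."""

    def __init__(self, name: str, tap_layer: int, weights: Vector, bias: float) -> None:
        self.name = name
        self.tap_layer = tap_layer  # index of the trunk layer this head plugs into
        self.weights = weights
        self.bias = bias

    def predict(self, activations: List[Vector]) -> float:
        features = activations[self.tap_layer]
        z = sum(w * f for w, f in zip(self.weights, features)) + self.bias
        return 1.0 / (1.0 + math.exp(-z))  # logistic score in (0, 1)


def classify(trunk: SharedTrunk, heads: List[LinearHead], image: Vector) -> Dict[str, float]:
    """Run the trunk once, only as deep as needed, and score every head."""
    depth = max(head.tap_layer for head in heads) + 1
    activations = trunk.forward(image, depth)
    return {head.name: head.predict(activations) for head in heads}
```

In this sketch the trunk runs once per image no matter how many heads are attached, so adding a new task costs only the small head on top of features that were already computed.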

What is a typical workflow for Lumos? You have a lot of tools that allow you to collect training data. One of the nicest things about being at Facebook is you have a lot of data, but it also comes with a lot of metadata. It could be hashtags. It could be text that people write about, and it could be any other metadata. So you can use lots of really cool techniques to collect training data. Then you train the model, and you have this control over the trade-off between accuracy and compute.

You deploy the model with the click of a button, and the moment you deploy the model every new photo and video that gets uploaded now gets run through your model without you doing any additional bit of engineering. And you can actually refine this model by using active learning. So you are literally doing research and engineering at scale together every day. And you don't need to be an expert in computer vision to do that.
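The talk does not say which active learning strategy is used. One common choice is uncertainty sampling, where the examples the current model is least sure about are sent for labeling first. A minimal sketch under that assumption, with the hypothetical function name `select_for_labeling` and scores assumed to be probabilities for a binary concept:

```python
from typing import Dict, List


def select_for_labeling(scores: Dict[str, float], budget: int) -> List[str]:
    """Return the `budget` item ids whose score is closest to 0.5.

    A score near 0.5 means the model is least confident about that item,
    so a human label there is assumed to be the most informative.
    Ties are broken by item id so the result is deterministic.
    """
    ranked = sorted(scores, key=lambda item: (abs(scores[item] - 0.5), item))
    return ranked[:budget]


# Hypothetical model scores for four newly uploaded photos.
example_scores = {"photo_a": 0.95, "photo_b": 0.52, "photo_c": 0.10, "photo_d": 0.45}
to_label = select_for_labeling(example_scores, budget=2)
```

The newly labeled items would then be added to the training set and the model retrained, repeating the loop as new uploads arrive.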

Here is a series of photos that come in and get classified through Lumos, and the concepts that are built through Lumos. Obviously you can only look at a certain portion because we get an image every four minutes.

Lumos today powers many applications. These are some of them. A specific application that I thought would be interesting to talk about here is population density map estimation. So here what happened was the Connectivity Lab cared a lot about where people live so that we can actually provide connectivity technology, different kinds of it, whether it's an urban area or a rural area. So what did they do? They went to Lumos and they trained a simple model that actually takes a satellite tile and says whether it's a house or not. And they applied it billions of times on various parts of the world.

Here is a high-resolution map on the right side that we were able to generate using this Lumos model. And they didn't have to build any new deep net. They just used a representation from one of the existing models. If you apply this billions of times you can detect the houses. And if you do it at scale ... This is Sri Lanka. This is Egypt. And this is South Africa. So based on the density of where people live, you can now use different kinds of connectivity technology, whether it's drones, satellites, or Facebook hardware installed in urban areas.
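The step from a per-tile "house or not" classifier to a density map can be sketched as a simple aggregation. This is an illustration only: the tile indexing, the `density_map` function, and the `is_house` callable are assumptions, and the real pipeline is not described in the talk.

```python
from collections import Counter
from typing import Callable, Dict, Iterable, Tuple

Tile = Tuple[int, int]  # (row, col) index of a small satellite tile


def density_map(
    tiles: Iterable[Tile],
    is_house: Callable[[Tile], bool],
    cell_size: int,
) -> Dict[Tile, int]:
    """Count house detections per coarse grid cell.

    `is_house` stands in for the trained classifier; each coarse cell
    covers `cell_size` x `cell_size` tiles.
    """
    counts: Counter = Counter()
    for row, col in tiles:
        if is_house((row, col)):
            counts[(row // cell_size, col // cell_size)] += 1
    return dict(counts)


# Toy 4x4 region in which a pretend classifier fires on three tiles.
detected = {(0, 0), (0, 1), (3, 3)}
all_tiles = [(r, c) for r in range(4) for c in range(4)]
coarse = density_map(all_tiles, lambda tile: tile in detected, cell_size=2)
```

Cells with higher counts would correspond to denser settlement, which is the signal used to choose between different connectivity technologies.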

If you think about what Lumos is trying to do, it's actually trying to learn a universal visual representation irrespective of the kind of problem that you are trying to solve. At F8, which is the Facebook developer conference, we talked about Mask R-CNN. This is the work that came out of Facebook research where you have a single network that is doing classification, detection, segmentation, and human pose estimation.

Think about it for a minute. Just five years ago if somebody had told you that you have one network, same compute, running on all photos and videos, that would give all of this, nobody would have believed it. And this is the world we are moving to. So there is a good chance we'll have a visual representation that is universal.

Here are some outputs, which are detection and segmentation outputs, and when you actually compare them to ground truth, even for smaller objects, the ground truth and the predictions of the algorithm actually match pretty well, and sometimes we cannot distinguish them. And taking it further you can do segmentation of people and pose estimation. So you can actually start reasoning about what activities people are engaging in in the photos and videos.
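How well a predicted box matches ground truth is commonly measured with intersection over union (IoU). The talk does not state which metric was used for this comparison, so the following is just a standard sketch, with boxes assumed to be `(x_min, y_min, x_max, y_max)` tuples:

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def box_area(box: Box) -> float:
    """Area of an axis-aligned box; degenerate boxes have area 0."""
    return max(0.0, box[2] - box[0]) * max(0.0, box[3] - box[1])


def iou(predicted: Box, ground_truth: Box) -> float:
    """Intersection over union of two boxes, in the range [0, 1]."""
    overlap: Box = (
        max(predicted[0], ground_truth[0]),
        max(predicted[1], ground_truth[1]),
        min(predicted[2], ground_truth[2]),
        min(predicted[3], ground_truth[3]),
    )
    intersection = box_area(overlap)
    union = box_area(predicted) + box_area(ground_truth) - intersection
    return intersection / union if union > 0 else 0.0
```

An IoU of 1.0 means the prediction and the ground truth coincide exactly, and 0.0 means they do not overlap at all.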

Now, the way we are moving, as rightly pointed out before, is that this understanding and this technology are moving to your phone. So your device, what you have, it's pretty powerful. So here are a couple of examples where the camera is understanding what it's seeing, whether it's classification of the scene and objects or understanding the activities of the people. This is Mass To Go, which is running at a few frames per second on your device. To do this we took a look at the entire pipeline, whether it's the modeling, the runtime engine, or the model size.

Taking it a step further, the next frontier for us is video understanding. Here, I'm not gonna play the video, but rather show you the dashboard that actually tells you what is happening in the video. Here is the dashboard. We use our latest face recognition technology to see when people are coming in and going out. We use the latest 3D ConvNet-based architectures to understand actions that are happening in the video. And we understand the speech and audio to see what people are talking about. This is extremely important. Now, with this kind of dashboard we have a reasonable understanding of what's happening in the video. But we are just getting started. The anatomy of video is much more complex.

So how do you scale this to 100% of videos? That is non-trivial. We have to do a lot of really useful and interesting things to be able to scale to 100% of videos. You're doing face recognition and actually doing friend tagging. The next one is actually taking it a step further, doing segmentation in video, and doing pose estimation. So we are able to understand that people are sitting, people are standing, talking to each other, and so on, with the audio.

That is basically the first layer of peeling the onion in video, and there's a lot more we can do here. Now another step that we are taking is connecting the physical world and the visual world. As rightly pointed out, we need to start working with LIDAR and 3D data. Here, what you see is the LIDAR data. We are actually using a deep net to do semantic segmentation of this three-dimensional LIDAR data and doing line-of-sight analysis on the fly.
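Line-of-sight analysis can be illustrated with a heavily simplified check. The sketch below assumes the segmented 3D data has already been reduced to obstacle heights sampled at evenly spaced points along the path between two antennas; the function name and inputs are hypothetical, and a real system would work on the full 3D scene.

```python
from typing import Sequence


def has_line_of_sight(
    heights: Sequence[float],
    tx_mast: float,
    rx_mast: float,
) -> bool:
    """Check whether a straight ray between two antennas clears all obstacles.

    `heights` holds ground or obstacle heights at evenly spaced samples,
    with the transmitter at index 0 and the receiver at the last index.
    `tx_mast` and `rx_mast` are antenna heights above those end points.
    """
    if len(heights) < 2:
        raise ValueError("need at least the two end points")
    start = heights[0] + tx_mast
    end = heights[-1] + rx_mast
    last = len(heights) - 1
    for i in range(1, last):
        ray_height = start + (end - start) * i / last  # linear interpolation
        if heights[i] >= ray_height:
            return False  # an obstacle reaches or crosses the ray
    return True
```

For example, a 5-unit building midway between two 10-unit masts leaves the path clear, while a 12-unit building blocks it.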

We brought down the deployment of Facebook hardware to connect urban cities from days and months to hours, because we were able to use computer vision technology. I have only ten minutes to cover whatever we could do, so I'm going to end it with one statement. I really believe to be able to bring AI to billions of people you need to really understand content and people. Thank you.