Physical AI Can’t Exist Without Eyes: Why Visual Tech Is the Real Engine Behind the Machines

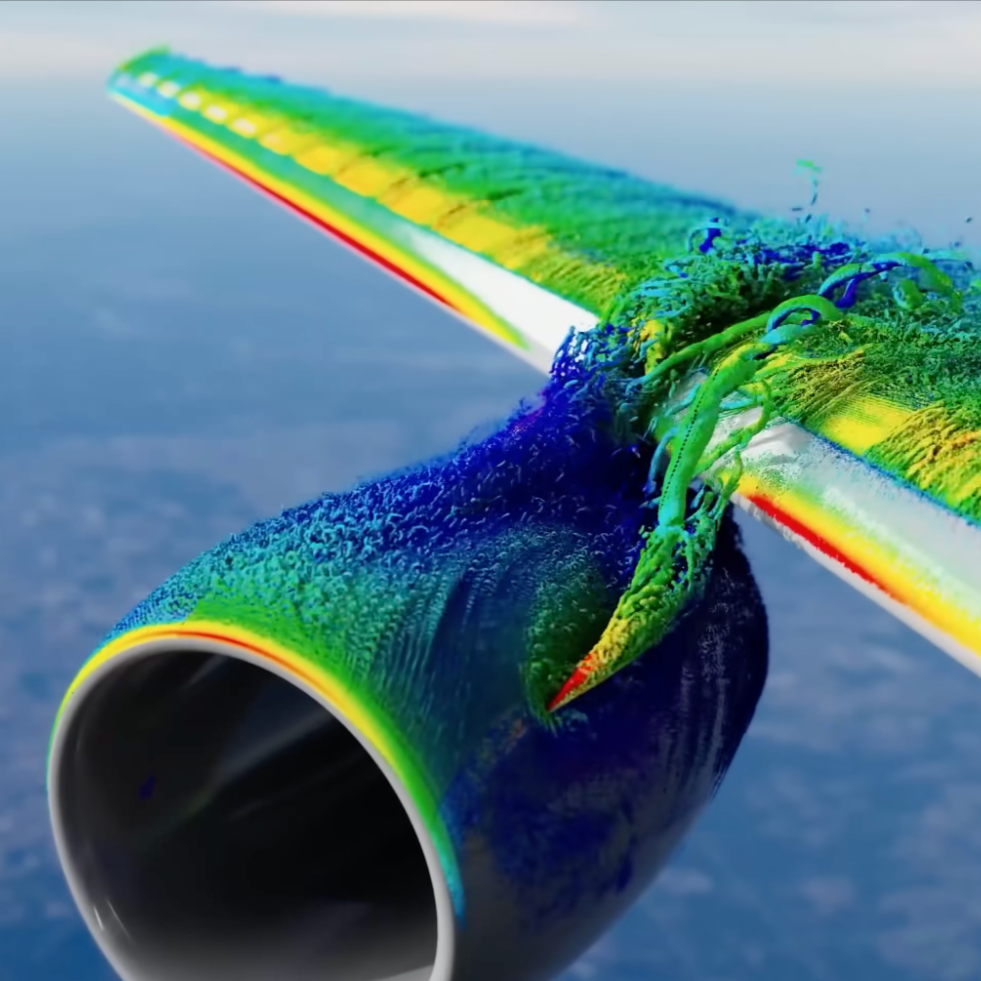

Every robot, drone, self-driving car, delivery bot, and surgical assistant that claims “intelligence” is fundamentally a product of visual and/or electromagnetic perception systems. The success of Physical AI doesn’t start with neural networks or GPUs – it starts with cameras, sensors, and spectral scanners that give machines their first sense of reality.

Physical AI will not succeed until machines can truly see.
