Computer Vision Will Empower Financial Investors To Make Millions, Advertisers Know When We Are Happy or Sad, and Help Doctors Improve Our Health!

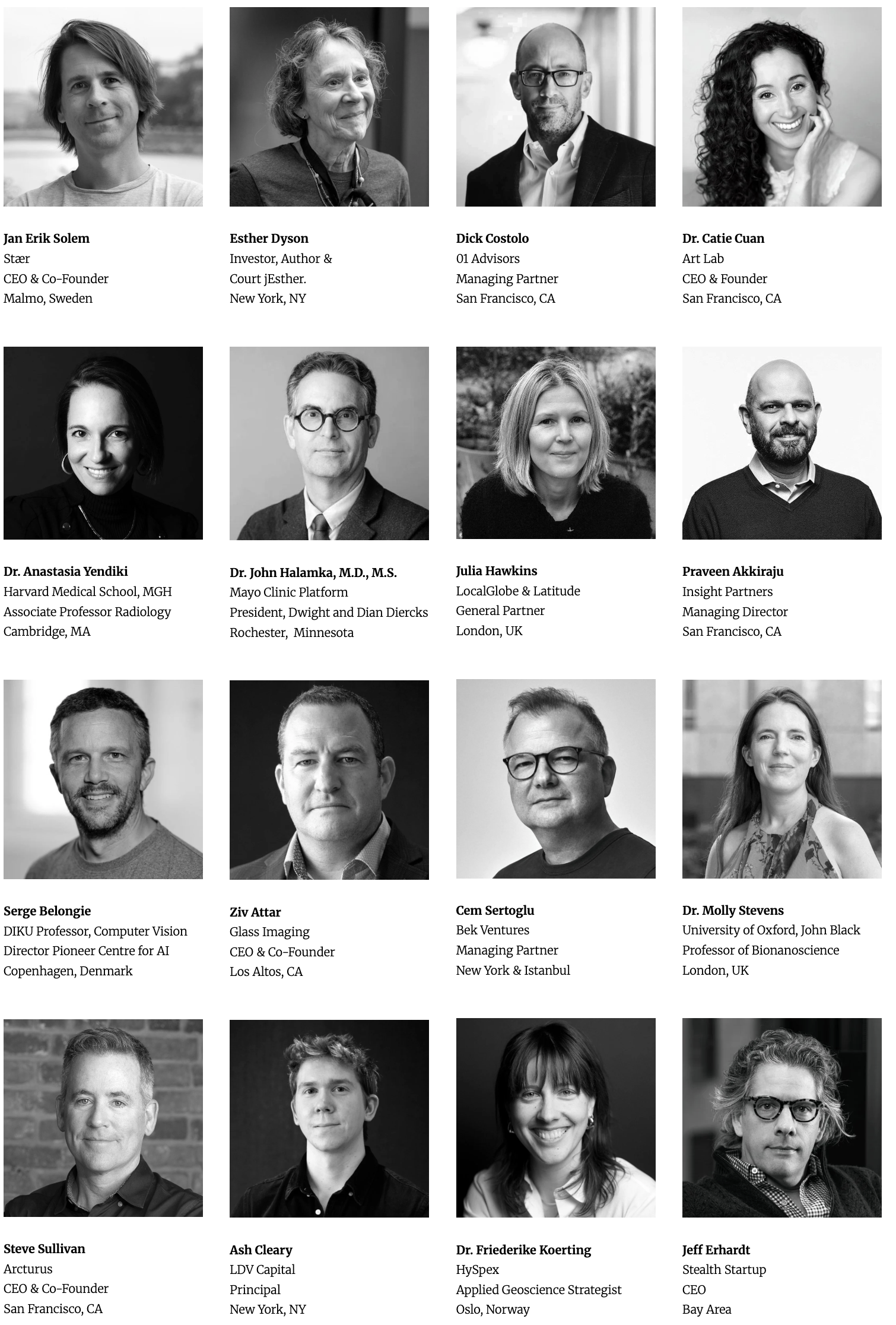

Every year, we bring together an impressive lineup of speakers, from tech giants to under-the-radar startups, esteemed research labs and leading venture capital firms. Come join our 12th Annual LDV Vision Summit on March 11, 2026.

Satellite imaging analysis of container counts in a shipping port reveals importing and exporting trends. ©Skybox Imaging

The analysis of content via computer vision and artificial intelligence is no longer a discussion among a select few in universities. Brilliant scientists and entrepreneurs are building the foundation of new technology companies that will disrupt major billion and trillion dollar markets, impacting humanity forever. I have been building companies in and tracking the visual technology ecosystem for about twenty years, including eighteen years of building startups in this sector from Silicon Valley, New York, and Europe. For the last three years, I have been tracking and investing in the visual technology ecosystem via our fund LDV Capital.

The visual technology ecosystem includes any business or technology that creates or leverages visual content in consumer or business verticals. Some verticals of the ecosystem are capture, storage, filtering, search, e-commerce, tagging, distribution, augmented reality, virtual reality, new cameras, mapping, content identity management, video analysis, visual games, content marketing, satellite imaging, medical imaging, and entertainment, among others.

Significant markets are going to be empowered or disrupted by computer vision and artificial intelligence. These include the financial, medical, advertising, fashion, security, manufacturing, agriculture, logistics, and entertainment markets among others.

Visual content from many sources has been around for years, but finally computers are starting to analyze content exponentially faster, with increased accuracy and more real-time data. Even more exciting is the ability of machines to understand complex signals from visual content, from economic trends to emotion, intent, feelings, and desires.

Here is a high-level overview of several major markets that are being disrupted by computer vision and artificial intelligence. Listen to computer vision experts speak at our next LDV Vision Summit.

Financial Investors See Real-Time Economic Market Trends from Satellite Imagery to Deliver Tremendous Value Creation

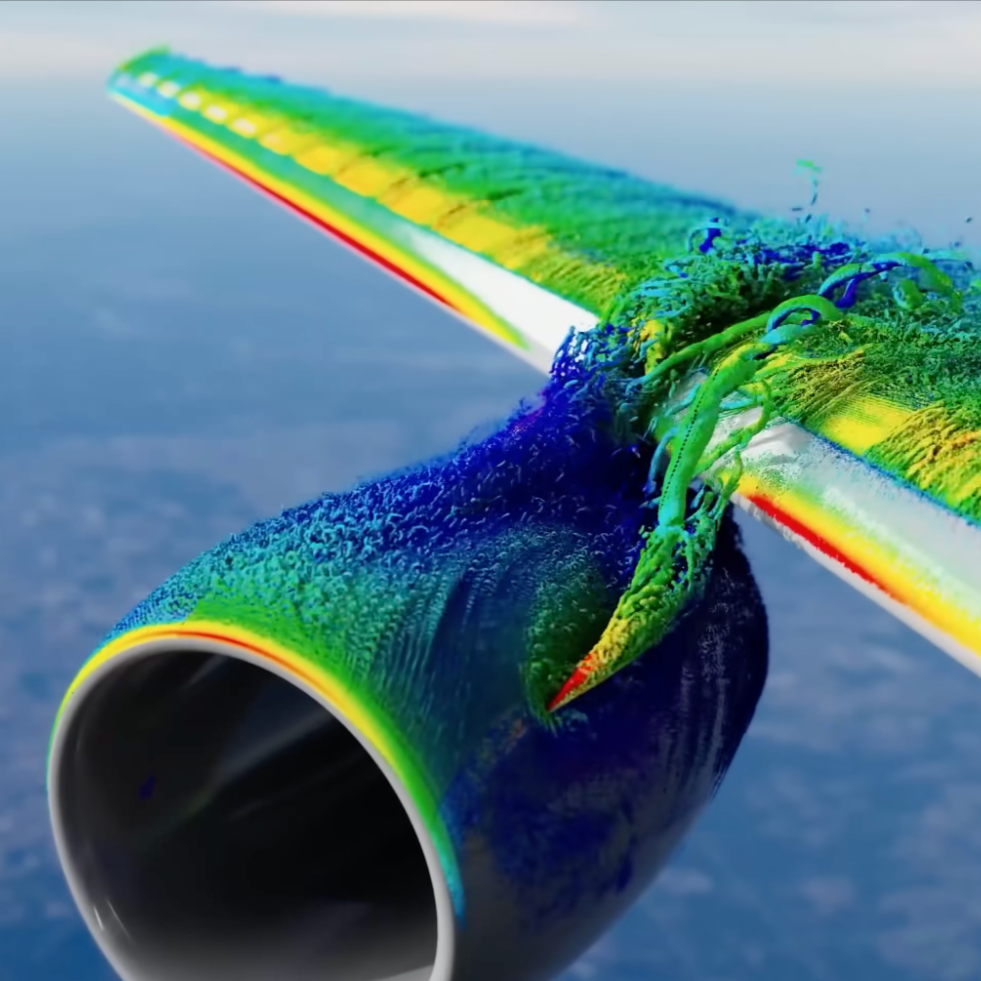

From Wall Street to international traders and hedge funds to sovereign wealth funds, we will have more accurate access to real-time global economic trends from satellite imagery. Images from satellites have been available for years, but adequate real-time images with global coverage have not existed. Because enough data is becoming available in real time and computer vision is advancing exponentially, investors, competitors, and governments will be able to analyze in real time the status of world oil reserves, agriculture yields, shipping port traffic, global economic growth and more. Accessing satellite imagery is easier these days, but the key factors delivering differentiation and value will be latency, accuracy, and the efficiency of extracting the valuable signal from the noise in petabytes, exabytes, and soon yobibytes of data.

Financial investors will now be able to track real-time shipping traffic in ports exponentially faster than via analog methods. ©Skybox

This would not be economical or efficient without leveraging computer vision, artificial intelligence, and recent cloud computing infrastructure advancements. This process to analyze global market trends will quickly surpass legacy methods used for tracking economic trends today.

Commodity traders are able to track the health of agricultural crops and expected yields. ©NASA

Skybox Imaging, Planet Labs, and Orbital Insight, among others, are in a race to visualize and better understand Earth from space. Skybox was acquired by Google last year for $500 million, Planet Labs recently raised $20M from the IFC (a member of the World Bank Group), and Orbital Insight recently raised nearly $9M in funding led by Sequoia. Skybox and Planet Labs are sending satellites with different strategies for quality and costs, but the goal is to visually index Earth from space. Orbital Insight is focused on understanding global trends through advanced image processing and data science. For this vision to become a reality, there first need to be exponentially more high-quality capture devices in space to increase the capture of real-time images, and then computer vision and artificial intelligence must be leveraged to analyze the content.

Orbital Insight analyzes international oil reserves via shadows with computer vision & artificial intelligence. ©Orbital Insight
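To make the idea of reading oil reserves from shadows more concrete, here is a minimal sketch. It is not Orbital Insight's method, which is not described here. It assumes a floating-roof storage tank, where the roof sinks as the tank empties, so the shadow the wall casts onto the roof grows relative to the shadow the tank casts on the ground. The function name and the pixel measurements are hypothetical.

```python
def estimate_fill_fraction(interior_shadow_px: float, exterior_shadow_px: float) -> float:
    """Rough fill fraction of a floating-roof tank from two shadow lengths.

    Assumption (not from the article): both shadows are measured along the
    same sun direction in the same image, so the sun angle cancels out.
    exterior shadow ~ tank height, interior shadow ~ height of the empty part.
    """
    if exterior_shadow_px <= 0:
        raise ValueError("exterior shadow length must be positive")
    empty_fraction = interior_shadow_px / exterior_shadow_px
    # Clamp to [0, 1] to absorb measurement noise.
    return max(0.0, min(1.0, 1.0 - empty_fraction))


# Hypothetical measurements: a 5-pixel interior shadow and a 20-pixel exterior shadow.
fill = estimate_fill_fraction(5.0, 20.0)
print(f"Estimated fill: {fill:.0%}")
```

Repeating an estimate like this across thousands of tanks and many dates is where computer vision becomes essential, since nobody could measure those shadows by hand at that scale.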

Medical Businesses Will Become More Effective, Efficient and Less Expensive via Computer Vision and Artificial Intelligence

Medical businesses, such as those dealing with disease diagnosis, decreasing outpatient care visits, and bone transplants, are being empowered and disrupted by leveraging computer vision and artificial intelligence. To date, it has been very hard to make advancements in enabling computers to analyze medical imagery files because there has not yet been a large enough data set of relevant images to analyze. Zebra Medical Imaging recently raised $8M in funding led by Khosla Ventures. They are building a database of anonymous medical images so that computerized diagnoses and analyses of images are possible.

It is amazing to think that patients go to the doctor for their regular mammogram or heart checkup for years and that the images from each visit are analyzed mostly by humans. It’s exciting to think about the potential for computer vision techniques and artificial intelligence to analyze the visual trends of millions of patients’ mammogram images, CT scans, and other X-rays. In doing so, hopefully, they will be able to identify diseases more accurately and earlier than is possible today. By comparing hundreds or even thousands of historical images of such patients, anomalies can hopefully be found sooner, leading to a more efficient disease diagnosis.

Many feel it is time to change healthcare. Decreasing follow-up doctor visits is also a major opportunity in the medical industry and could save patients and insurance companies a ton of money. Captureproof is trying to reduce in-person follow-up visits with doctors. They are helping medical providers monitor, triage, and consult colleagues through their mobile and web services. We all have heard our doctors say, “Take two aspirin, and call me in the morning.” However, now doctors can tell their patients, “Take two photos, share them with me, and I will text you in the morning.”

Another company pushing the envelope with 3D modeling, computer vision, and stem cell extraction is Epibone. Using 3D customized bone transplants via imaging and stem cell extraction, Epibone wants to enhance people’s lives with personalized implants via a 3D model from the patient’s CT scan.

©Epibone

Advertisers Increase ROI by Leveraging Computers That Know When We Are Happy, Sad, or Angry

Computers can watch videos and tell us what they are seeing via artificial intelligence. Computer vision knows when we are happy, sad, or angry. Why do we care? Emotions predict our attitudes and actions. Content creators, brands, and publishers can now collaborate with companies such as Affectiva, Emotient, and RealEyes to better monetize and target their content by analyzing the sentiments of faces. This is a tremendous opportunity to learn about the customers’ state of mind as they emotionally respond to marketing, product, content, and service experiences. Advertisers can learn if their billboard advertisement in Times Square or their advertisement on their YouTube channel is making people happy, curious, perplexed, confused, sad, or angry. Gathering people together for focus groups while executives and marketers sit behind the one-way mirror will become a legacy process. Focus groups can now be done anywhere there are cameras and people.

Soon, we will be able to analyze all of the people’s expressions in the Vatican Square in Rome via satellite images in real time.

Sentiment analysis knows when we are happy, sad, confused, perplexed and excited. ©Emotient

Content creators leverage sentiment analysis to better understand when guests are happy, sad, or angry on Late Night with Conan. ©Emotient

I am not sure if this is a good or bad thing, but there is definitely endless potential. Remember when we didn’t know we wanted a mobile phone with GPS, two cameras, an accelerometer, and constant Internet access? Now we cannot live without it, and I think computer-based sentiment analysis will be the same for businesses.

Affectiva delivers their AFFDEX score which empowers advertisers. ©Affectiva

The revenue potential is tremendous for accurately, automatically, and efficiently keywording all of the video in the world.

One of our LDV Capital portfolio companies, Clarifai, offers a service that leverages artificial intelligence and deep learning to understand the content in a video. Computer vision and A.I. will analyze video and deliver exponential monetization opportunities for companies with content assets. Video assets will be more easily searchable, monetized and distributed where contextually relevant. Content creators, brands, advertisers, and publishers will also be able to add contextually relevant advertisements alongside the exact frame in a video with a multi-racial family driving a BMW with the roof down along the coast while laughing at the youngest child’s jokes. Finding this exact frame manually is easy enough if someone is reviewing a 1-minute video. However, finding all of the frames with a desired group of keyword signals in billions of hours of video footage would not be efficient, accurate, or affordable for any platform without leveraging computer vision and artificial intelligence.

Clarifai automatically associating keywords with videos via their computer vision and A.I. neural network. ©Clarifai
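Once every frame carries keywords, finding "all of the frames with a desired group of keyword signals" becomes a simple lookup. The sketch below illustrates only that last step. It assumes the keywords have already been produced by some tagging service, and the data structure, function name, and tags are hypothetical rather than Clarifai's actual API.

```python
from typing import Dict, List, Set


def find_frames(frame_tags: Dict[int, Set[str]], wanted: Set[str]) -> List[int]:
    """Return the frame indices whose tags include every wanted keyword."""
    return sorted(i for i, tags in frame_tags.items() if wanted <= tags)


# Hypothetical output of an automatic tagging step, keyed by frame index.
frame_tags = {
    0: {"road", "coast"},
    1: {"car", "family", "coast", "laughing"},
    2: {"car", "city"},
}

matches = find_frames(frame_tags, {"car", "family", "coast"})
print(matches)
```

The hard and expensive part is generating accurate tags for billions of hours of footage, which is exactly the work that computer vision and artificial intelligence take over from human reviewers.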

Computers Know What Entertainment Is Relevant for You

Content is being created every day from traditional media companies, modern media brands, bloggers, YouTube stars, and our grandparents. Where do we want to get our content from, and why is it contextually relevant? There used to be one or two major newspapers in a city, but now there are thousands and thousands of news sources for local and international news. There used to be only a couple of TV channels on our black and white TVs with the antenna that we had to hold to improve the signal. There are tremendous challenges and opportunities facing original content creators today.

There is an explosion of digital video. Verizon FiOS Custom TV launched a new service to compete with Netflix, which is competing with HBO, which is competing with YouTube, which is competing with Amazon Prime, which is competing with Apple TV, which is competing with NBC among others. Will these centralized platforms win, or will platforms such as VHX.TV or GBox empower video creators to better market, distribute, and monetize their content, all while building a community? For example, the VHX community has made over $5 million in gross sales on over 4,000 content titles.

There has been discussion for years about when our personal A.I. would accurately review the content on the web and only deliver the most contextually relevant content to you. Maybe my personal A.I. will also deliver 5% serendipitous content that will inspire me but that might not be something that I would typically enjoy. When will this happen? When will my personal A.I. be accurate enough to entertain me and make me happy? Do we want this to happen? I do.

Analyzing Human Activity via Artificial Intelligence Will Increase ROI for Retailers

Analyzing live video streams with computer vision can help retailers in many ways. What if you are trying to choose between three new locations for your next retail store on Oxford Street in London? Each location varies in costs and square footage, but frequently, the most important aspect is whether the right demographic of potential customers will be walking on the street near your future store.

Are your ideal customers walking around the store in the morning, during lunch, after work, on weekend afternoons, or during late evenings? Will they be business people racing to work who are less likely to walk in to buy clothes, or will they be teenagers about 12-17 years old who are your perfect target customers? Historically, you would have to gather research reports from disparate sources and probably hire people to go stand outside those locations to take notes every day for weeks. Prism Skylabs and Placemeter are two companies working to solve these problems for retailers, among other sectors. Placemeter raised $6 million last fall in a round led by New Enterprise Associates. Placemeter creates a real-time data layer about places and streets by automatically extracting measurable data from a live video stream, such as the number of people walking into a store and how heavy traffic is on a specific street.

©Placemeter

© Prism SkyLabs
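As a rough illustration of the kind of measurable data such a stream could yield, the sketch below totals pedestrian counts by hour of day to answer the question of when potential customers walk past a location. It assumes an upstream vision system has already counted people per video segment. The function name, input format, and sample numbers are all made up for illustration and do not reflect Placemeter's or Prism Skylabs' products.

```python
from collections import Counter
from datetime import datetime
from typing import Iterable, Tuple


def footfall_by_hour(detections: Iterable[Tuple[str, int]]) -> Counter:
    """Sum people counts per hour of day from (ISO timestamp, people) pairs."""
    counts: Counter = Counter()
    for timestamp, people in detections:
        hour = datetime.fromisoformat(timestamp).hour
        counts[hour] += people
    return counts


# Made-up sample: people counted in three video segments outside one location.
sample = [
    ("2015-05-01T08:15:00", 12),
    ("2015-05-01T08:45:00", 9),
    ("2015-05-01T12:30:00", 30),
]

hourly = footfall_by_hour(sample)
busiest_hour, busiest_count = hourly.most_common(1)[0]
print(f"Busiest hour: {busiest_hour}:00 with {busiest_count} people")
```

Running the same tally for each of the three candidate locations would let a retailer compare them on actual foot traffic instead of weeks of manual note-taking.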

My Personal A.I. Delivers Customized Products And Says I Should Have More Clothes And Furniture With The Color Burgundy

Computer vision knows what colors are best for your furniture, makeup, and clothes. A startup called Plum Perfect is a visual recommendation engine which drives personalized advertising and e-commerce. It leverages computer vision technology to analyze your photos and help you personalize your look in seconds.

©Plum Perfect

There are several new companies that believe computer vision can exponentially increase clothing commerce. On-demand customization of clothes is another exciting use of digital imagery and computer vision that will exponentially empower and/or disrupt the fashion world. For example, Volumental believes that customized tailored products will become the norm, rather than a luxury.

Volumental and Scarosso are working on custom Italian shoes. ©Volumental

Volumental uses computer vision algorithms for shoes today. It believes that eyeglasses, custom shirts, suits, medically required products like shoe insoles, and any wearable product could benefit from a precise fitting.

The orthotic and insole market sector is estimated to be in the billions and growing. This is another vertical in the medical industry that will probably be disrupted by data science that analyzes visual images and other technologies like 3D printing. One company working in this space, SOLS, leverages images and deep learning algorithms to create 3D-printed custom orthotics. It also combines insights from the images along with information about the patient’s body, lifestyle, and medical needs to generate the custom orthotics.

3D scanning of feet for 3D-printed custom insoles from SOLS. ©SOLS

I am excited to see how computer vision and artificial intelligence improve our lives.

However, I definitely do not have all of the answers! Hopefully we can all learn from over 80 international experts in Visual Technology who will be presenting at our next LDV Vision Summit.